A few weeks back, someone asked me what tools I actually use to build AI systems.

I paused. Not because it was complicated to answer, but because the honest answer is kind of messy.

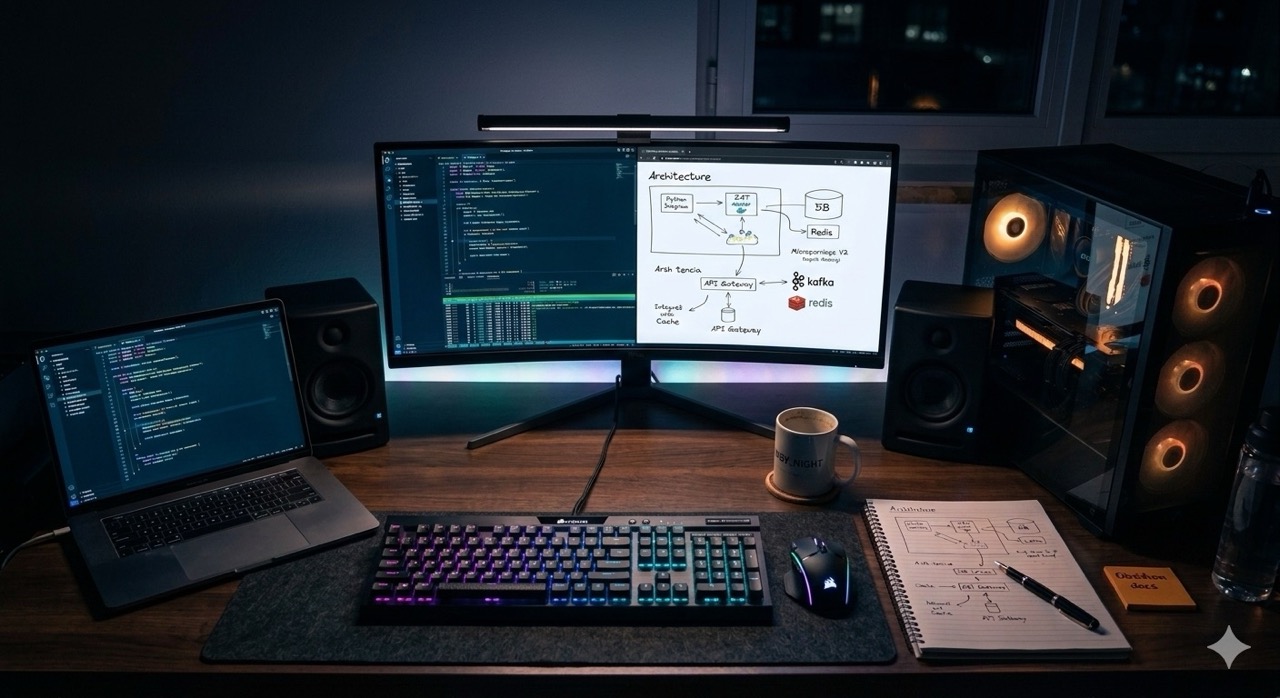

My setup isn’t a single platform or a neat subscription stack. It’s a mix of cloud models, local inference rigs, frameworks, automation pipes, and a bunch of experiments in various states of working. Some of it is polished. Some of it is held together with Docker and hope.

But it works, and I’ve learned a lot building it. So here’s what’s actually inside my AI lab.

Why I Stopped Chasing Platforms and Built My Own Stack

The AI tooling space right now reminds me a bit of shopping for a guitar effects pedal — there’s always something newer and shinier launching every week, and half of them promise to do everything. In reality, none of them do everything well.

So instead of chasing the next big platform, I started building around one simple goal: get from idea to working prototype as fast as possible.

Speed of experimentation is the real edge right now. The people I see learning the fastest aren’t the ones reading the most — they’re the ones building small things, breaking them, and shipping again.

The Models I Use Every Day

I lean on two models daily, GPT-5.2 (through ChatGPT) and Claude, but for completely different jobs.

ChatGPT is where I go when I need to think. If I’m designing a new agent workflow or trying to figure out how to structure a multi-step system, I use ChatGPT almost like a whiteboard. The goal isn’t to get final answers; it’s to see angles I might have missed. It’s a good thinking partner for that.

Claude handles writing and code. When I’ve built something complex and need to document it clearly — for a client, for a team, or just for myself three months from now — Claude produces the cleanest output. I also run code reviews, structured explanations, and content writing through it.

Different jobs, different tools. Pretty simple once you stop expecting one model to do everything.

Local Inference: Where Things Get Interesting

Cloud models are great, but running models locally changes how you experiment.

No API cost anxiety. Full control over your data. Offline prototyping. Those three things alone make it worth setting up.

I’m currently running Gemma 3 12B locally. My local inference stack uses LM Studio for quick prompt experiments and vLLM for anything more serious, since it serves models efficiently when workloads get heavier. Pair this with a decent GPU and your workstation becomes a surprisingly capable mini inference lab.
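Both LM Studio and vLLM can expose an OpenAI-compatible HTTP endpoint, so one small client can talk to either. Here is a minimal sketch using only the standard library. The base URL and model id are assumptions (port 8000 is vLLM's usual default, LM Studio typically listens on 1234), so swap in whatever your own server reports on startup.

```python
import json
import urllib.request

# Assumed defaults; adjust to whatever your local server prints on startup.
BASE_URL = "http://localhost:8000/v1"  # vLLM's usual default; LM Studio typically uses port 1234
MODEL_NAME = "gemma-3-12b"             # hypothetical model id; use the id your server lists


def build_chat_request(prompt, model=MODEL_NAME, base_url=BASE_URL, temperature=0.2):
    """Build (but do not send) a chat-completions request for a local server."""
    payload = {
        "model": model,
        "messages": [{"role": "user", "content": prompt}],
        "temperature": temperature,
    }
    return urllib.request.Request(
        url=f"{base_url}/chat/completions",
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def ask_local_model(prompt, **kwargs):
    """Send the prompt and return the first choice's text. Needs a running server."""
    request = build_chat_request(prompt, **kwargs)
    with urllib.request.urlopen(request, timeout=60) as response:
        body = json.loads(response.read().decode("utf-8"))
    return body["choices"][0]["message"]["content"]
```

With a server running, `ask_local_model("Summarise RAG in one sentence")` would return the model's reply as a string. Keeping the request builder separate from the network call makes it easy to inspect the payload without a GPU in the loop.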

RAG and the Vector Database I Keep Going Back To

If you’re building AI applications, you’ll hit RAG eventually. Retrieval-Augmented Generation is genuinely one of the most practical patterns out there — it gives models access to external knowledge without fine-tuning anything.

For this I use Qdrant. It’s fast, the developer experience is clean, and it doesn’t overcomplicate things. The typical flow I use looks like this:

Documents → Embeddings → Qdrant → Retrieval → LLM Response

Once that loop is running cleanly, you can build things that feel genuinely useful, not just impressive in demos.
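To make the shape of that loop concrete, here is a toy version in plain Python. It is a sketch under loud assumptions: the "embedding" is just a word count, and `InMemoryStore` is a hypothetical stand-in for Qdrant, not its client API. A real setup would use an embedding model and a Qdrant collection in the same places.

```python
import math
import re
from collections import Counter


def embed(text):
    """Toy embedding: lowercase word counts. A real setup would call an embedding model."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def cosine(a, b):
    """Cosine similarity between two sparse count vectors."""
    dot = sum(a[token] * b[token] for token in a if token in b)
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


class InMemoryStore:
    """Hypothetical stand-in for the vector database (Qdrant in the flow above)."""

    def __init__(self):
        self.points = []  # list of (vector, original text)

    def upsert(self, documents):
        for doc in documents:
            self.points.append((embed(doc), doc))

    def search(self, query, limit=2):
        query_vector = embed(query)
        ranked = sorted(self.points, key=lambda p: cosine(query_vector, p[0]), reverse=True)
        return [text for _, text in ranked[:limit]]


def build_prompt(question, context_chunks):
    """Assemble the retrieved chunks and the question into one LLM prompt."""
    context = "\n".join(f"- {chunk}" for chunk in context_chunks)
    return f"Answer using only this context:\n{context}\n\nQuestion: {question}"


store = InMemoryStore()
store.upsert([
    "Qdrant stores vectors and returns the nearest neighbours for a query.",
    "Docker containers keep each experiment isolated.",
    "Obsidian holds notes as plain Markdown files.",
])
question = "Where are vectors stored?"
prompt = build_prompt(question, store.search(question, limit=1))
```

The resulting `prompt` is what would be sent to the LLM for the final step. Each function maps to one arrow in the flow, so swapping the toy parts for real ones does not change the overall structure.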

Frameworks for Building Actual Systems

Calling APIs directly gets old fast. Real AI applications have tools, retrieval layers, memory, and external integrations woven together — and you need something to orchestrate all of that.

I use two frameworks depending on what I’m building. LangChain is my go-to for structured chains and retrieval systems. Google’s Agent Development Kit is where I go when I want multiple agents working together on a task — it’s a different mental model, but useful once it clicks.

My Development Environment

Where does all this actually get built? Three tools: Cursor, Warp Terminal, and Docker.

Cursor changed how I approach coding. AI-assisted development inside Cursor isn’t about replacing your thinking. It’s like pair-programming with someone who has read every documentation page ever written. You stay in control of the architecture; the AI handles the tedious parts.

Warp Terminal adds some genuinely nice quality-of-life improvements to command-line work. And Docker is the glue holding everything together — every experiment lives in a container so I can spin things up and tear them down without breaking my main environment.
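The spin-up, tear-down habit can be sketched as a small helper that composes a `docker run` command for a throwaway container. The function names and the `/work` mount point are illustrative assumptions; `--rm`, `-v`, and `-w` are standard `docker run` flags.

```python
import subprocess
from pathlib import Path


def docker_run_command(image, command, workdir="."):
    """Compose a `docker run` invocation for a disposable experiment container."""
    host_path = Path(workdir).resolve()
    return [
        "docker", "run",
        "--rm",                      # delete the container when it exits
        "-v", f"{host_path}:/work",  # mount the experiment folder into the container
        "-w", "/work",               # start inside the mounted folder
        image,
        *command,
    ]


def run_experiment(image, command, workdir="."):
    """Run the container and return its exit code. Needs a local Docker install."""
    return subprocess.run(docker_run_command(image, command, workdir)).returncode
```

For example, `run_experiment("python:3.12-slim", ["python", "main.py"])` would run `main.py` from the current folder in a fresh container and leave nothing behind once it exits.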

How a Typical Experiment Actually Flows

When I’m working on something new, it roughly goes like this:

I start with ChatGPT to explore the problem space and stress-test the idea. Then I sketch out a rough architecture on paper or in Obsidian. From there, I use Claude, Codex, and Cursor to get the initial implementation going fast — I’m not precious about generated code at this stage. Then comes the real work: iterating inside Cursor, tightening the logic, handling edge cases. If the experiment proves out, I wire up automation through n8n to take the manual repetition out of it.

That whole cycle can take a few hours. That’s the point.

Keeping Track of It All

One thing I didn’t expect when I started running this many experiments: you generate a lot of information. Prompt variations, architecture notes, things that didn’t work and why, code snippets worth keeping.

I manage this with n8n feeding into Obsidian. n8n handles the collection and piping of information; Obsidian is where everything lives. Over time it turns into a personal knowledge graph of experiments, which is genuinely useful when you’re trying to remember why you made a certain decision six months ago.
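Since an Obsidian vault is just a folder of Markdown files, the "landing" end of that pipeline can be very small. This is a sketch of one possible note writer, not the actual workflow: the field names, the vault path, and the idea that an automation step calls it are all assumptions.

```python
from datetime import date
from pathlib import Path


def render_note(title, summary, tags, created=None):
    """Render an experiment note as Markdown with YAML frontmatter."""
    created = created or date.today()
    tag_lines = "\n".join(f"  - {tag}" for tag in tags)
    return (
        "---\n"
        f"created: {created.isoformat()}\n"
        "tags:\n"
        f"{tag_lines}\n"
        "---\n\n"
        f"# {title}\n\n"
        f"{summary}\n"
    )


def save_note(vault_dir, title, summary, tags, created=None):
    """Write the note into the vault folder and return its path."""
    vault = Path(vault_dir)
    vault.mkdir(parents=True, exist_ok=True)
    path = vault / f"{title}.md"  # assumes the title is a valid file name
    path.write_text(render_note(title, summary, tags, created), encoding="utf-8")
    return path
```

Calling `save_note("vault/experiments", "Prompt variation 3", "What changed and why.", ["prompts"])` would drop a dated, tagged note into that folder, where Obsidian picks it up like any other file.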

The Hardware

Day-to-day I’m on a MacBook Pro M2 Max with 32GB RAM. For heavier local model work, I have a custom desktop running a Ryzen CPU, 64GB RAM, and an RTX 5070 Ti.

That GPU is the meaningful difference. It takes local inference from “technically possible but slow” to actually practical for real experimentation.
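A quick back-of-the-envelope calculation shows why GPU memory and quantization matter so much here. This sketch estimates the memory needed for model weights alone, assuming a nominal 12 billion parameters; it ignores the KV cache, activations, and runtime overhead, so real usage will be higher.

```python
def weight_memory_gb(params_billion, bits_per_weight):
    """Approximate memory for model weights alone, in decimal gigabytes."""
    bytes_total = params_billion * 1e9 * bits_per_weight / 8
    return bytes_total / 1e9


# Nominal 12B-parameter model at three common weight precisions.
for label, bits in [("16-bit", 16), ("8-bit", 8), ("4-bit", 4)]:
    print(f"{label}: ~{weight_memory_gb(12, bits):.0f} GB")
# prints:
# 16-bit: ~24 GB
# 8-bit: ~12 GB
# 4-bit: ~6 GB
```

The gap between roughly 24 GB and roughly 6 GB is the difference between a model that will not fit on most consumer cards and one that leaves headroom for context.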

The One Thing That Actually Matters

After building this out and running through more experiments than I can count, the honest lesson is this: the stack itself isn’t the point.

Progress comes from experimentation — from building things, breaking them, and learning what actually works versus what just sounds good in a blog post. The stack is just the environment that makes fast experimentation possible.

Right now I’m digging into multi-agent orchestration, on-device AI experiments, and AI-driven developer workflows. Not because they’re trending, but because I want to understand what they actually enable in practice.

That curiosity is what I’d actually recommend building first, before you pick any of these tools.